Google Jumps Back Into the Open Source AI Race With Gemma 4

In brief

- Google dropped Gemma 4, a family of open models under the Apache 2.0 license.

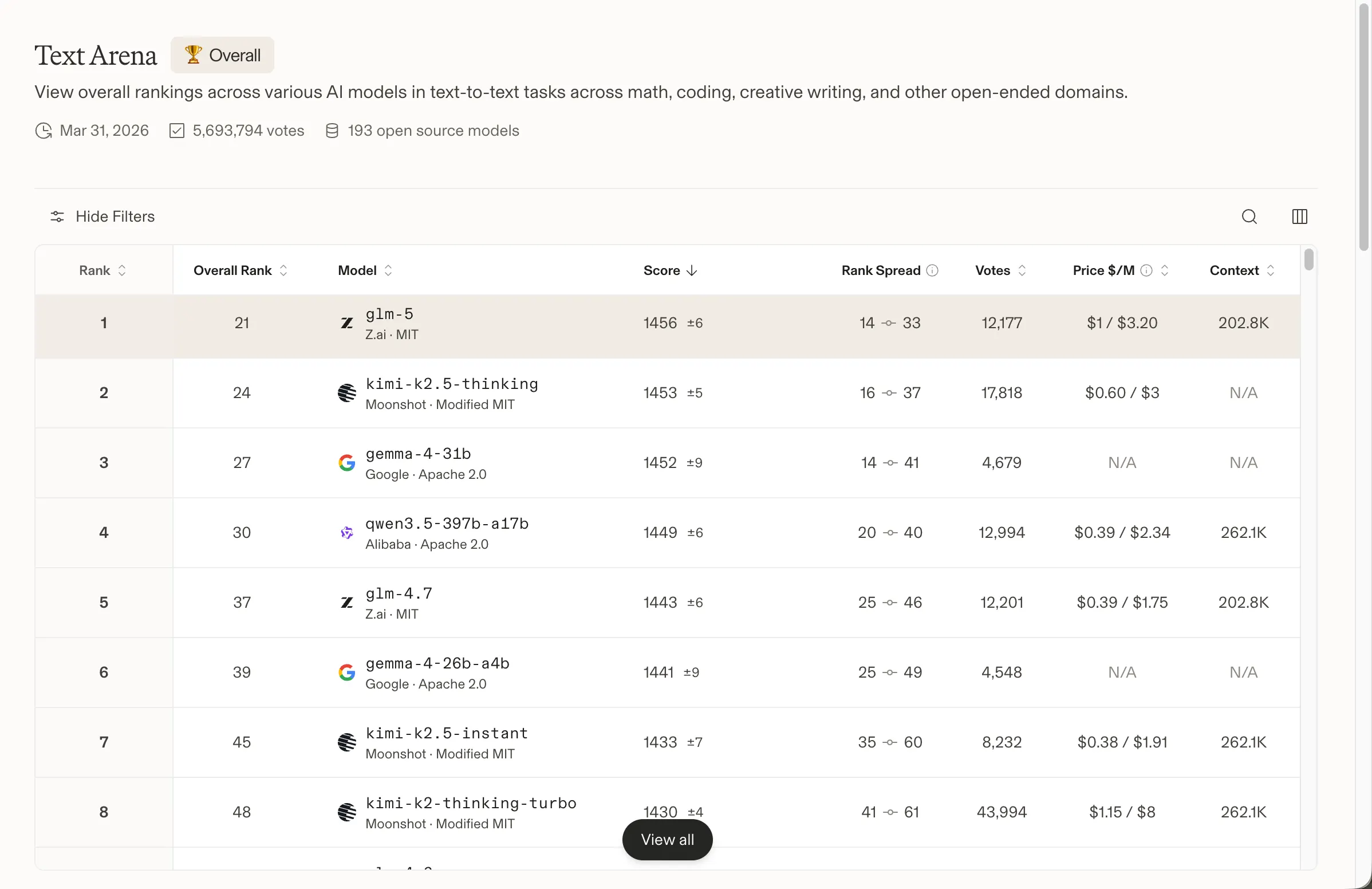

- The four-model lineup spans phones to data centers, and the 31B model already ranks third among open models on Arena AI’s text leaderboard.

- U.S. open-source AI gets a needed boost, as Gemma 4—backed by DeepMind—positions itself as the strongest American contender against DeepSeek, Qwen, and other Chinese leaders.

Google’s open AI ambitions got a lot more serious today. The company released Gemma 4, a family of four open-weight models built on the same research as Gemini 3, and licensed under Apache 2.0—a significant departure from the more restrictive terms on previous Gemma versions. Developers have downloaded past Gemma generations over 400 million times, spawning more than 100,000 community variants. This release is the most ambitious one yet.

We just released Gemma 4 — our most intelligent open models to date.

Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows.

Released under a commercially… pic.twitter.com/W6Tvj9CuHW

— Google (@Google) April 2, 2026

For the past year, the open-source AI leaderboard has been largely a Chinese affair. DeepSeek, Minimax, GLM and Qwen have dominated the top spots, leaving American alternatives scrambling for relevance. As Decrypt reported last year, Chinese open models went from barely 1.2% of global open-model usage in late 2024 to roughly 30% by the end of 2025, with Alibaba’s Qwen even overtaking Meta’s Llama as the most-used self-hosted model worldwide.

Meta’s Llama used to be the default choice for developers who wanted a capable, locally runnable model. That reputation has eroded: Llama’s Meta-controlled license raised questions about its true open-source status, and its performance slipped behind the Chinese competition. The Allen Institute’s OLMo family tried to fill the gap but failed to gain meaningful traction. OpenAI released its gpt-oss models in August 2025, which gave the ecosystem a breath of fresh air, but they were never designed to be frontier competitors. And yesterday, a 30-person U.S. startup called Arcee AI released Trinity, a 400 billion parameter open model that made a compelling case that the American scene wasn’t completely dead.

Gemma 4 follows that momentum, this time with the full weight of Google DeepMind behind it, turning it into arguably the best American model in the open-source AI scene. The model is “built from the same world-class research and technology as Gemini 3,” Google said in its announcement. Gemma 4 ships in four sizes: Effective 2B and 4B for phones and edge devices, a 26B Mixture of Experts model focused on speed, and a 31B Dense model optimized for raw quality.

The 31B Dense currently ranks third among all open models on Arena AI’s text leaderboard. The 26B MoE sits sixth. Google claims both outcompete models 20 times their size—a claim that holds up, at least against the Arena AI numbers, where Chinese models still hold the top two spots.

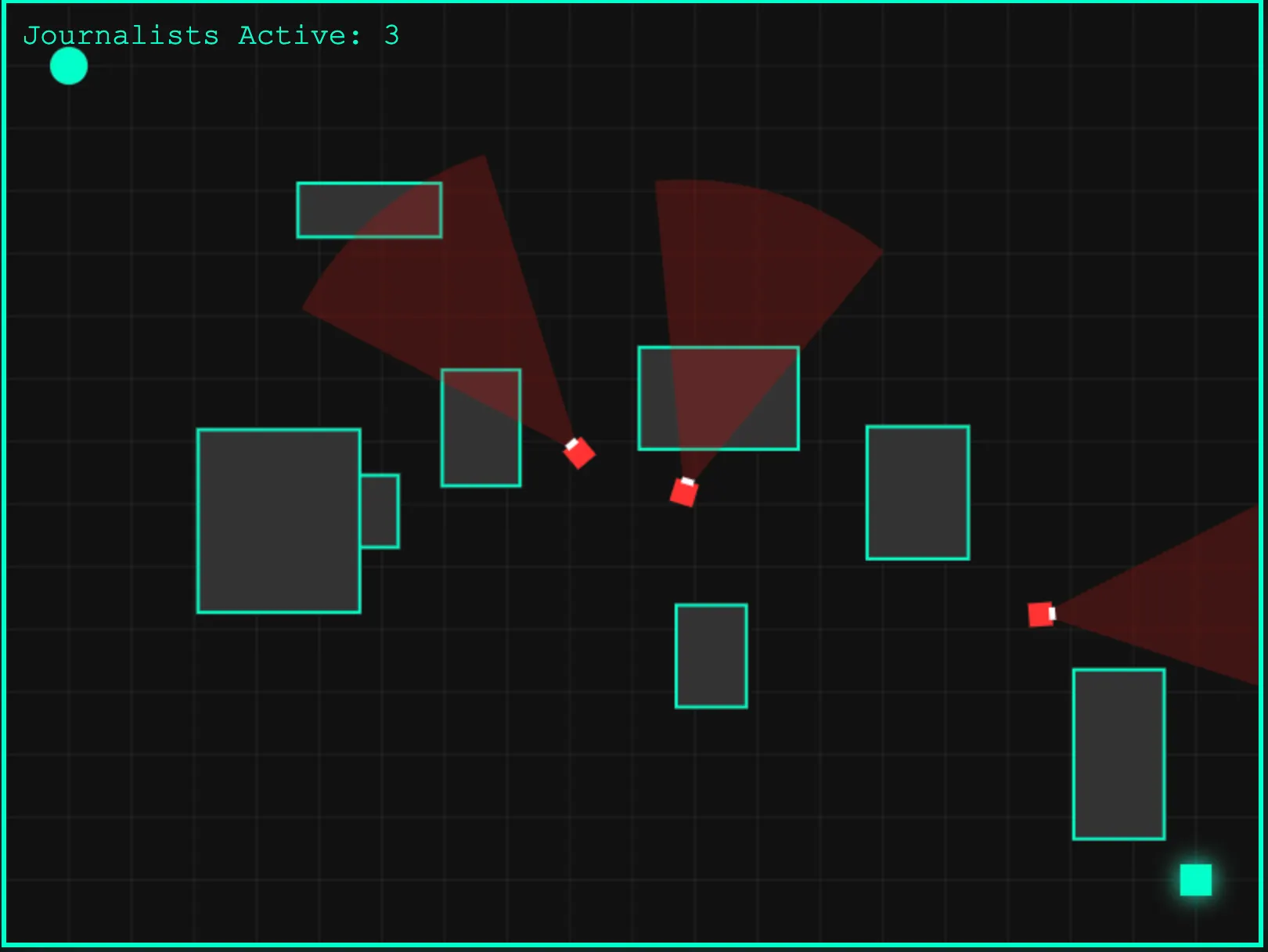

We tested Gemma 4. It’s capable, with some caveats. The model applies reasoning even to tasks that don’t require it, which can make responses feel over-engineered for simple prompts. Creative writing is decent (serviceable, not inspired) and likely improves with more specific guidance and prompt engineering.

Where it delivered most clearly was code. Asked to generate a game, the output wasn’t particularly flashy or elaborate, but it ran without errors on the first try. Not bad for a 31 billion parameter model. That zero-shot reliability is arguably more valuable than a prettier result that needs debugging. You can try the (basic, yet functional) game here.

The four variants cover the full hardware spectrum. The E2B and E4B models are built for Android phones, Raspberry Pi, and edge devices, running completely offline with near-zero latency, native audio input, and a 128K context window. The 26B and 31B models target workstations and cloud deployments, extending context to 256K and adding native function-calling and structured JSON output for building autonomous agents. All four models process images and video natively. The larger models’ full-precision weights fit on a single 80GB NVIDIA H100 GPU; quantized versions run on consumer hardware.

The Apache 2.0 license is the other headline. Google’s previous Gemma releases used a custom license that created legal ambiguity for commercial products. Apache 2.0 removes that friction entirely: developers can modify, redistribute, and commercialize without worrying about Google changing the terms later.

Hugging Face co-founder Clement Delangue praised the release, saying that “Local AI is having its moment” and that it is the future of the AI industry. Google DeepMind CEO Demis Hassabis went further, calling Gemma 4 “the best open models in the world for their respective sizes.”

Excited to launch Gemma 4: the best open models in the world for their respective sizes. Available in 4 sizes that can be fine-tuned for your specific task: 31B dense for great raw performance, 26B MoE for low latency, and effective 2B & 4B for edge device use - happy building! pic.twitter.com/Sjbe3ph8xr

— Demis Hassabis (@demishassabis) April 2, 2026

That’s a strong claim. Proprietary systems from Anthropic, OpenAI, and Google’s own Gemini still lead on the hardest benchmarks. But for open-weight models you can run locally, modify freely, and deploy on your own infrastructure? The competition just got significantly thinner.

You can try Gemma 4 now in Google AI Studio (31B and 26B) or Google AI Edge Gallery (E2B and E4B). Model weights are also available on Hugging Face, Kaggle, and Ollama.
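For readers who want to sanity-check the single-GPU claim for the 26B and 31B models, here is a rough back-of-the-envelope sketch in Python. It is not Google’s sizing method. It assumes that “full precision” means 16-bit (bf16) weights, that quantized builds use 8 or 4 bits per weight, and that the nominal parameter counts are exact. The function names are hypothetical, and the estimate covers weights only, not the context cache or runtime overhead.

```python
# Rough estimate of how much memory a model's weights occupy.
# Assumptions (not from the article): "full precision" means 16-bit bf16,
# quantized formats use 8 or 4 bits per weight, and the nominal parameter
# counts (26B, 31B) are exact. Context cache and activations are ignored.

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb(params_billion: float, fmt: str) -> float:
    """Weight size in GB (10**9 bytes) for a given storage format."""
    return params_billion * BYTES_PER_PARAM[fmt]


def fits(params_billion: float, fmt: str, gpu_gb: float = 80.0) -> bool:
    """True if the weights alone fit in the given amount of GPU memory."""
    return weight_gb(params_billion, fmt) <= gpu_gb


if __name__ == "__main__":
    for name, size in [("26B MoE", 26.0), ("31B Dense", 31.0)]:
        for fmt in BYTES_PER_PARAM:
            gb = weight_gb(size, fmt)
            print(f"{name} {fmt}: ~{gb:.1f} GB, fits on 80 GB: {fits(size, fmt)}")
```

Under these assumptions, the 31B model’s 16-bit weights come to about 62 GB, which leaves some headroom on an 80GB card, while a 4-bit version comes to about 15.5 GB, in range of high-end consumer GPUs.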